LLM RAG

Anthropic Claude API: A Practical Guide

Dive into the Claude API: learn how to integrate Anthropic’s Claude models into your apps, and discover how Claude and Obot can help you build agents, handle prompts, and scale real-world workflows.

Top 10 RAG Tutorials in 2024 + Bonus LangChain Tutorial

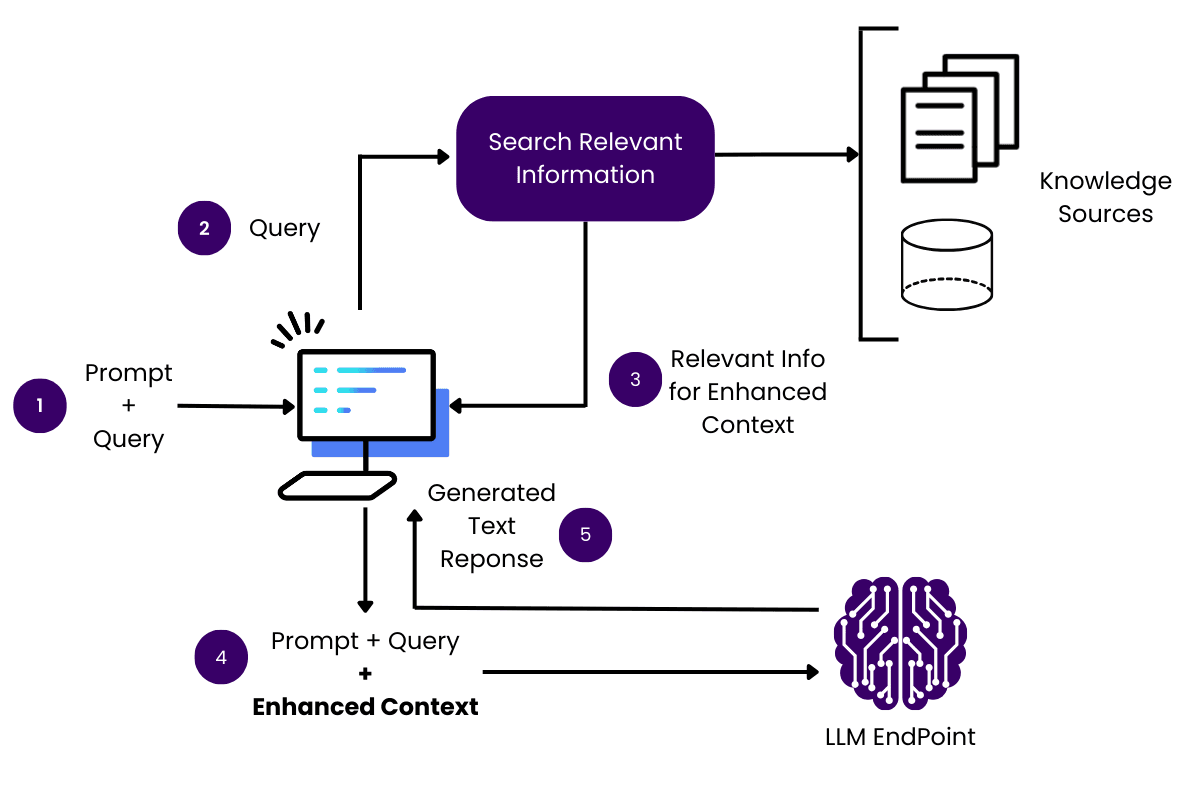

What Is Retrieval Augmented Generation (RAG)? Retrieval-augmented generation (RAG) combines large language models (LLMs) with external knowledge retrieval. Traditional LLMs generate responses based solely on the data they were pre-trained on. With RAG, the model can access updated and specific information at the time of inference, providing more accurate and context-rich responses. This method leverages repositories of external data, […]
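Here is a minimal sketch of the retrieve-then-generate idea. It assumes a tiny in-memory document list and a hypothetical word-overlap scorer, which is not any particular library's method. Retrieval picks the most relevant passages and prepends them to the prompt that would then be sent to an LLM:

```python
import re

def tokenize(text: str) -> set[str]:
    # Lowercase word tokens; a deliberately simple stand-in for a real retriever.
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    # Hypothetical scorer: rank documents by how many query words they share.
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    # Augment the user question with retrieved context before generation.
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

docs = [
    "The 2024 pricing update lowered the API rate to $2 per million tokens.",
    "Our office is closed on public holidays.",
    "API keys can be rotated from the account settings page.",
]
print(build_prompt("What is the API pricing in 2024?", docs, k=1))
```

In a production system the returned prompt would go to the model, and the word-overlap scorer would typically be replaced by embedding-based similarity search.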

RAG vs. LLM Fine-Tuning: 4 Key Differences and How to Choose

What Is RAG (Retrieval-Augmented Generation)? Retrieval-Augmented Generation (RAG) merges large language models (LLMs), typically based on the Transformer deep learning architecture, with retrieval systems to enhance the model’s output quality. RAG operates by fetching relevant information from large collections of texts (e.g., Wikipedia, a search engine index, or a proprietary dataset) and fusing this external […]
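Retrieval systems commonly rank passages by vector similarity to the query. The sketch below uses simple term-frequency vectors as a stand-in for learned embeddings. It ranks a small hypothetical corpus by cosine similarity, and `vectorize`, `cosine`, and `rank` are illustrative names only:

```python
import math
import re
from collections import Counter

def vectorize(text: str) -> Counter:
    # Term-frequency vector over lowercase word tokens (stand-in for an embedding model).
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse term-frequency vectors.
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def rank(query: str, passages: list[str]) -> list[tuple[float, str]]:
    # Return (score, passage) pairs, most similar first.
    q = vectorize(query)
    scored = [(cosine(q, vectorize(p)), p) for p in passages]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)

corpus = [
    "Wikipedia is a free online encyclopedia.",
    "A search engine index maps terms to documents.",
    "Fine-tuning updates model weights on new data.",
]
for score, passage in rank("How does a search engine index documents?", corpus):
    print(f"{score:.2f}  {passage}")
```

The top-ranked passages would then be combined with the user's question before generation. Swapping `vectorize` for a real embedding model leaves the ranking logic unchanged.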

Understanding RAG: 6 Steps of Retrieval Augmented Generation (RAG)

What Is Retrieval Augmented Generation (RAG)? Retrieval Augmented Generation (RAG) is a machine learning technique that combines the power of retrieval-based methods with generative models. It is used particularly in Natural Language Processing (NLP) to enhance the capabilities of large language models (LLMs). RAG works by fetching relevant documents or data snippets in response to […]
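Retrieval returns snippets rather than whole documents, so source texts are commonly split into smaller, overlapping chunks before indexing. The sketch below is one simple word-window chunker. The name `chunk_text` and its `chunk_size`/`overlap` defaults are hypothetical choices for illustration, not a claim about which of the article's six steps this corresponds to:

```python
def chunk_text(text: str, chunk_size: int = 8, overlap: int = 2) -> list[str]:
    # Split text into windows of chunk_size words; neighbors share `overlap` words.
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reaches the end of the text
    return chunks

doc = "one two three four five six seven eight nine ten eleven twelve"
for chunk in chunk_text(doc, chunk_size=5, overlap=2):
    print(chunk)
```

The overlap keeps a sentence that straddles a boundary retrievable from either neighboring chunk, at the cost of some duplicated text in the index.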