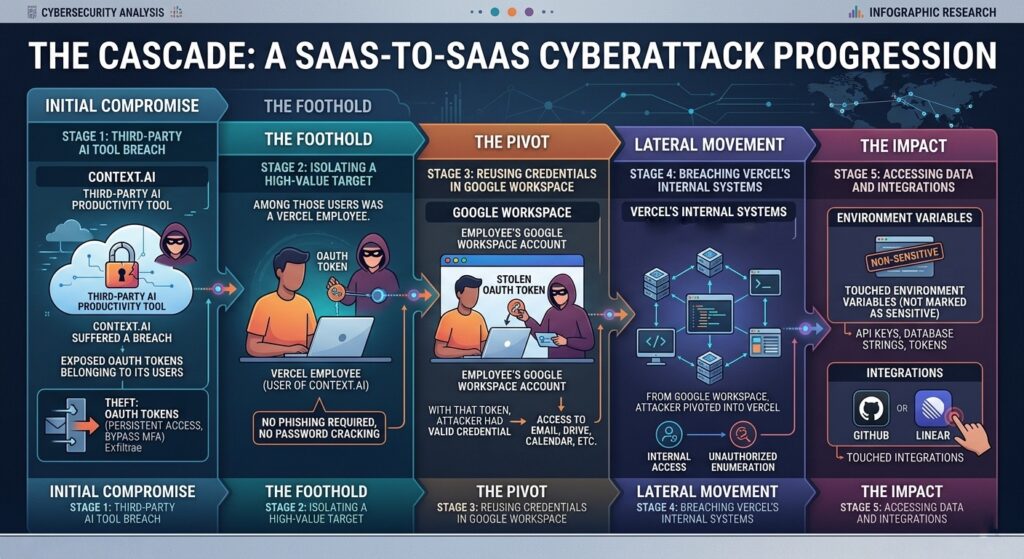

MCP security earned a concrete case study on April 19, 2026, when Vercel published a security bulletin confirming unauthorized access to internal systems. A third-party AI productivity tool was breached, its OAuth tokens were exposed, and an attacker used those credentials to walk through an employee’s Google Workspace account into Vercel’s internal environment. The reported exposure extended to environment variables, GitHub integrations, Linear, and potentially npm tokens tied to some of the most widely deployed JavaScript frameworks in production today.

The incident is instructive precisely because Vercel’s core infrastructure held. The weakness was in the trust boundary between Vercel and a tool its employees used, a boundary that exists in every organization that has connected AI productivity tooling to its identity layer via OAuth. For engineering and security leaders accelerating AI adoption, the Vercel breach is the clearest real-world demonstration yet of what happens when those connections accumulate without centralized governance.

The Attack Chain: How One AI Tool Compromised an Entire Platform

According to Vercel’s official security bulletin, published April 19, 2026, attackers gained unauthorized access to certain internal Vercel systems. Reporting from Cryptopolitan fills in the mechanism: the intrusion originated from a third-party AI tool that had been granted Google Workspace OAuth access. The attacker didn’t break down Vercel’s front door. They walked through a side entrance a productivity tool had left propped open.

How the Chain Unfolded

The sequence follows a pattern supply-chain security researchers have warned about for years.

Context.ai, a third-party AI productivity tool, suffered a breach that exposed OAuth tokens belonging to its users. Among those users was a Vercel employee. With that token in hand, the attacker had a valid credential for the employee’s Google Workspace account, with no phishing or password cracking required. From Google Workspace, the attacker pivoted into Vercel’s internal systems, reportedly accessing environment variables that had not been marked as sensitive and touching integrations including GitHub and Linear. Vercel’s own post-incident guidance points directly at this exposure vector, recommending that customers review their environment variables and use the sensitive environment variable feature going forward.

A Trust Boundary Failure, Not an Engineering Failure

Vercel’s core infrastructure held. The breach exploited the trust boundary between Vercel and the third-party tools its employees use, the same boundary present in every organization that has connected AI productivity tools to core identity systems via OAuth.

This is where MCP security becomes a concrete operational concern rather than an abstract threat model. The blast radius was shaped by permissions granted months or years earlier, when the risk calculus looked different. Any one of the dozens of third-party tools connected to employee accounts can serve as a viable entry point. One of them did.

Blast Radius: From One OAuth Token to the npm Ecosystem

Vercel maintains Next.js and Turbo, frameworks embedded in production deployments across every industry. When a threat actor reportedly obtains GitHub and npm tokens associated with that kind of organization, the downstream exposure isn’t theoretical. According to reporting on the incident, the attacker claims to have obtained access keys, API keys, GitHub and npm tokens, source code, and internal deployment details. Confirmed internal access combined with integration-level reach into GitHub and Linear makes major downstream impact probable.

MCP Security and the Pattern Underneath

The LiteLLM case makes the structural pattern legible. Mercor described itself as “one of thousands” of organizations affected by a supply chain attack routed through a single dependency. That means thousands of distinct organizations, each with its own security program, were breached via a shared upstream component they didn’t directly control. The Vercel incident follows the same shape: a breach of one tooling layer propagates risk outward along the dependency graph, reaching organizations that had no direct involvement in the original compromise.

Ainvest’s analysis frames the Linear and GitHub integration exposure as a supply chain risk, not simply a Vercel internal incident. Any project using those integrations faces a potential chain of compromise. That’s not catastrophizing. It’s reading the architecture like a map.

AI tools and MCP-connected integrations have introduced a new class of trust boundary into enterprise environments, one that often carries privileged access to code repositories, deployment pipelines, and secrets storage. When those boundaries fail in a third-party tool, the blast radius is shaped by permissions granted at connection time, not by any decision the downstream organization made the day of the breach.

Your AI Tools Are a Trust Boundary

Count the AI tools your team uses. Coding assistants, AI-powered project managers, meeting summarizers, workspace suites. Each one almost certainly requested OAuth access during setup, a permission grant that felt routine at the time and has been quietly accumulating privilege ever since. Those grants don’t expire when the risk calculus changes. They don’t scope down when an employee changes roles. They persist, and they compound.

MCP Security Starts at the Connection Layer

Most AI tools are well-built and run by teams with legitimate security programs. The problem is that a breach anywhere in that dependency chain converts a trusted OAuth grant into an attacker credential, and the blast radius is determined by what was permitted at connection time, not by anything your security team controls on the day of the incident.

The Vercel breach makes one architecture question impossible to defer: do your AI tool integrations represent isolated, audited connections with defined scopes, or a collection of persistent, broadly permissioned grants no one has reviewed since they were created? For most organizations, it’s the latter: broad OAuth grants, no centralized inventory, no automated revocation, and no audit trail connecting tool access to actual operational need.

An MCP gateway changes the shape of that exposure. Rather than each AI tool holding its own persistent OAuth grant to Google Workspace or GitHub, connections route through a governed control plane that enforces fine-grained, just-in-time access. When a tool is compromised, there’s a single revocation point. When an integration reaches beyond its defined scope, there’s a policy to enforce and a log to review. Obot MCP Gateway is built around exactly this model: centralized visibility into what every AI tool can reach, with least-privilege enforcement and the ability to pull the thread on any integration instantly.
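As a rough illustration of that model, the sketch below shows how a gateway-style control plane might check each request against a per-tool scope policy, issue only short-lived grants, and expose one revocation switch per tool. The `Gateway` class, its method names, the tool name, and the scope strings are all hypothetical; this is not Obot MCP Gateway’s API, only a minimal picture of the idea.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class Gateway:
    """Hypothetical control plane for illustration; not a real product API."""
    # tool name -> scopes the policy allows that tool to request
    policy: dict[str, set[str]]
    # (tool, scope) -> expiry time of a just-in-time grant
    grants: dict[tuple[str, str], datetime] = field(default_factory=dict)
    revoked: set[str] = field(default_factory=set)
    audit_log: list[tuple[str, str, str]] = field(default_factory=list)

    def request_access(self, tool: str, scope: str, now: datetime,
                       ttl: timedelta = timedelta(minutes=15)) -> bool:
        """Issue a short-lived grant only if the policy allows the scope."""
        allowed = tool not in self.revoked and scope in self.policy.get(tool, set())
        if allowed:
            self.grants[(tool, scope)] = now + ttl
        self.audit_log.append((tool, scope, "granted" if allowed else "denied"))
        return allowed

    def is_authorized(self, tool: str, scope: str, now: datetime) -> bool:
        """A call is authorized only while an unexpired grant exists."""
        expiry = self.grants.get((tool, scope))
        return tool not in self.revoked and expiry is not None and now < expiry

    def revoke(self, tool: str) -> None:
        """Single revocation point: drop every grant the tool holds."""
        self.revoked.add(tool)
        self.grants = {k: v for k, v in self.grants.items() if k[0] != tool}
        self.audit_log.append((tool, "*", "revoked"))


# Example: one tool, one permitted scope (both names are made up).
now = datetime(2026, 4, 19, tzinfo=timezone.utc)
gw = Gateway(policy={"meeting-summarizer": {"calendar.read"}})
gw.request_access("meeting-summarizer", "calendar.read", now)  # within policy
gw.request_access("meeting-summarizer", "drive.read", now)     # outside policy
gw.revoke("meeting-summarizer")                                # tool compromised
```

The design point is that the tool never holds a standing credential: access expires on its own, every decision lands in the audit log, and a compromised tool is cut off with a single call.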

The tools your team uses don’t have to change. The trust model underneath them does.

What Organizations Should Do, Right Now and Structurally

Immediate Steps: Follow the Remediation Baseline

If your organization runs workloads on Vercel, start with Vercel’s own guidance.

- The April 2026 security bulletin recommends reviewing environment variables and enabling the sensitive environment variable feature for anything that shouldn’t be readable in plaintext.

- Go further: Cloaked’s post-incident analysis recommends rotating GitHub personal access tokens, fine-grained tokens, and deploy keys, with priority on anything that can publish or deploy code.

- Do that rotation at the source, not just inside Vercel.
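The environment variable review in the first step can be partly automated. The sketch below assumes you have exported your variables into a list of records with a `name` and a `sensitive` flag; that record shape and the name patterns are assumptions for illustration, not Vercel’s export format or an official rule set.

```python
import re

# Heuristic name patterns that usually indicate a secret.
# This list is an assumption; tune it for your own naming conventions.
SECRET_NAME = re.compile(
    r"(SECRET|TOKEN|PASSWORD|PRIVATE|API_?KEY|CREDENTIAL)", re.IGNORECASE
)


def unprotected_secrets(env_vars: list[dict]) -> list[str]:
    """Return names that look like secrets but are not flagged sensitive."""
    return [
        v["name"]
        for v in env_vars
        if SECRET_NAME.search(v["name"]) and not v.get("sensitive", False)
    ]


# Hypothetical inventory; real data would come from your own export.
inventory = [
    {"name": "NPM_TOKEN", "sensitive": False},
    {"name": "DATABASE_PASSWORD", "sensitive": True},
    {"name": "NEXT_PUBLIC_SITE_URL", "sensitive": False},
    {"name": "GITHUB_API_KEY"},  # flag missing, treated as not sensitive
]
print(unprotected_secrets(inventory))  # ['NPM_TOKEN', 'GITHUB_API_KEY']
```

A name-based check will miss secrets with innocuous names, so treat the output as a starting list for rotation rather than a complete one.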

Rotate first, investigate second. Once credentials are no longer live, pull recent deployment logs and look for anomalies in timing, origin, or scope. If you use Linear or GitHub integrations with Vercel, treat those connections as potentially touched until you can confirm otherwise.
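A first pass over deployment logs can be scripted. The following sketch assumes each log record has been normalized into a dictionary with `id`, `created_at` (ISO 8601 with an explicit offset), and `origin` fields. Those field names, the origin allow-list, and the working-hours window are hypothetical and would need to match your own data.

```python
from datetime import datetime

KNOWN_ORIGINS = {"github-actions", "vercel-cli"}  # hypothetical allow-list
WORK_HOURS = range(8, 19)                         # 08:00-18:59, an assumption


def flag_deployments(records: list[dict]) -> list[tuple[str, list[str]]]:
    """Return (deployment id, reasons) for records that deserve a closer look."""
    flagged = []
    for r in records:
        reasons = []
        ts = datetime.fromisoformat(r["created_at"])
        if ts.hour not in WORK_HOURS:
            reasons.append("unusual time")
        if r["origin"] not in KNOWN_ORIGINS:
            reasons.append("unknown origin")
        if reasons:
            flagged.append((r["id"], reasons))
    return flagged


# Made-up records for illustration.
records = [
    {"id": "dpl_1", "created_at": "2026-04-17T14:05:00+00:00", "origin": "github-actions"},
    {"id": "dpl_2", "created_at": "2026-04-18T03:12:00+00:00", "origin": "github-actions"},
    {"id": "dpl_3", "created_at": "2026-04-18T10:30:00+00:00", "origin": "manual-upload"},
]
print(flag_deployments(records))
# [('dpl_2', ['unusual time']), ('dpl_3', ['unknown origin'])]
```

Flags like these only narrow the search; a flagged deployment still needs a human to confirm whether it was legitimate.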

The broader action cuts across every AI tool in your environment. Run an inventory of OAuth grants tied to employee accounts. Productivity assistants, coding tools, AI-powered project managers: each one likely holds permissions scoped at setup and never revisited. That audit is unpleasant. The alternative is discovering your exposure the way Vercel did.
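One way to start that inventory is to rank grants by how broad and how idle they are. The sketch below assumes the grants have already been exported into records with `user`, `app`, `scopes`, and `last_used` fields; the record shape, the example apps, the set of scopes treated as broad, and the 90-day threshold are all assumptions rather than an official classification.

```python
from datetime import date

# Scopes treated as "broad" in this sketch (full Drive and full Gmail access).
# Which scopes count as broad is a judgment call for your organization.
BROAD_SCOPES = {
    "https://www.googleapis.com/auth/drive",
    "https://mail.google.com/",
}
STALE_AFTER_DAYS = 90  # assumed threshold for an unused grant


def review_grants(grants: list[dict], today: date) -> list[dict]:
    """Flag grants that hold broad scopes or have gone unused for too long."""
    findings = []
    for g in grants:
        broad = sorted(set(g["scopes"]) & BROAD_SCOPES)
        idle_days = (today - g["last_used"]).days
        if broad or idle_days > STALE_AFTER_DAYS:
            findings.append({
                "user": g["user"],
                "app": g["app"],
                "broad_scopes": broad,
                "idle_days": idle_days,
            })
    # Broadest grants first, then the longest-idle ones.
    return sorted(findings, key=lambda f: (-len(f["broad_scopes"]), -f["idle_days"]))


READ_CAL = "https://www.googleapis.com/auth/calendar.readonly"
grants = [  # hypothetical export
    {"user": "alice@example.com", "app": "notes-ai",
     "scopes": ["https://www.googleapis.com/auth/drive", READ_CAL],
     "last_used": date(2026, 4, 10)},
    {"user": "bob@example.com", "app": "summarizer",
     "scopes": [READ_CAL], "last_used": date(2025, 11, 1)},
    {"user": "carol@example.com", "app": "code-helper",
     "scopes": [READ_CAL], "last_used": date(2026, 4, 18)},
]
for finding in review_grants(grants, today=date(2026, 4, 20)):
    print(finding)
```

In this made-up data, the first grant is flagged for holding full Drive access and the second for sitting unused past the threshold, while the third passes. The ranking puts the grants most worth revoking or re-scoping at the top of the review queue.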

The Structural Response: Centralized Control Over AI Tool Access

Reactive remediation solves the immediate problem. It doesn’t address the architecture that created it.

As AI agents and MCP-connected tooling proliferate across enterprise environments, each new integration is a potential entry point. Individual employees granting OAuth access to individual tools, without centralized visibility or policy enforcement, produces a permission surface no security team can audit manually.

The structural answer is governance at the connection layer. AI tool integrations touching code repositories, deployment pipelines, or secrets management should route through a centralized control plane rather than accumulating direct OAuth grants. That model gives security teams a single place to enforce least-privilege policies, a single revocation point when a tool is compromised, and an audit log connecting access to actual operational need. Obot MCP Gateway is built to provide exactly that.

Vercel’s guidance is the reactive baseline. A governed MCP gateway architecture is how you avoid needing it again.

The Weakest Link Is Already Connected

The Vercel incident won’t be the last one shaped like this. As AI tooling proliferates across enterprise environments, the attack surface grows with every OAuth grant an employee approves during onboarding.

The organizations that come out ahead won’t be the ones that restrict AI adoption. They’ll be the ones that govern it structurally:

- Centralized visibility into what every tool can reach

- Least-privilege access enforced at connection time

- A single revocation point when something goes wrong

Routing AI tool connections through a governed control plane doesn’t slow teams down; it makes the permissions they’re already granting auditable and recoverable. That’s the difference between a contained incident and a cascading one.

MCP security isn’t a future concern for when AI adoption matures. The Vercel breach is the case study that proves it’s a present requirement.